Shadow Testing a Fraud Vendor Before You Touch Production

The fastest way to make a bad fraud buying decision is to trust a polished demo.

Every vendor can show a clean dashboard, a few good examples, and a latency number that looks comfortable. None of that tells you how the system behaves on your own traffic, inside your own approval flow, with your own mix of customers, missing signals, payout patterns, and operational constraints.

If you are evaluating a fraud vendor seriously, the right next step is not production. It is a shadow test.

What a shadow test actually is

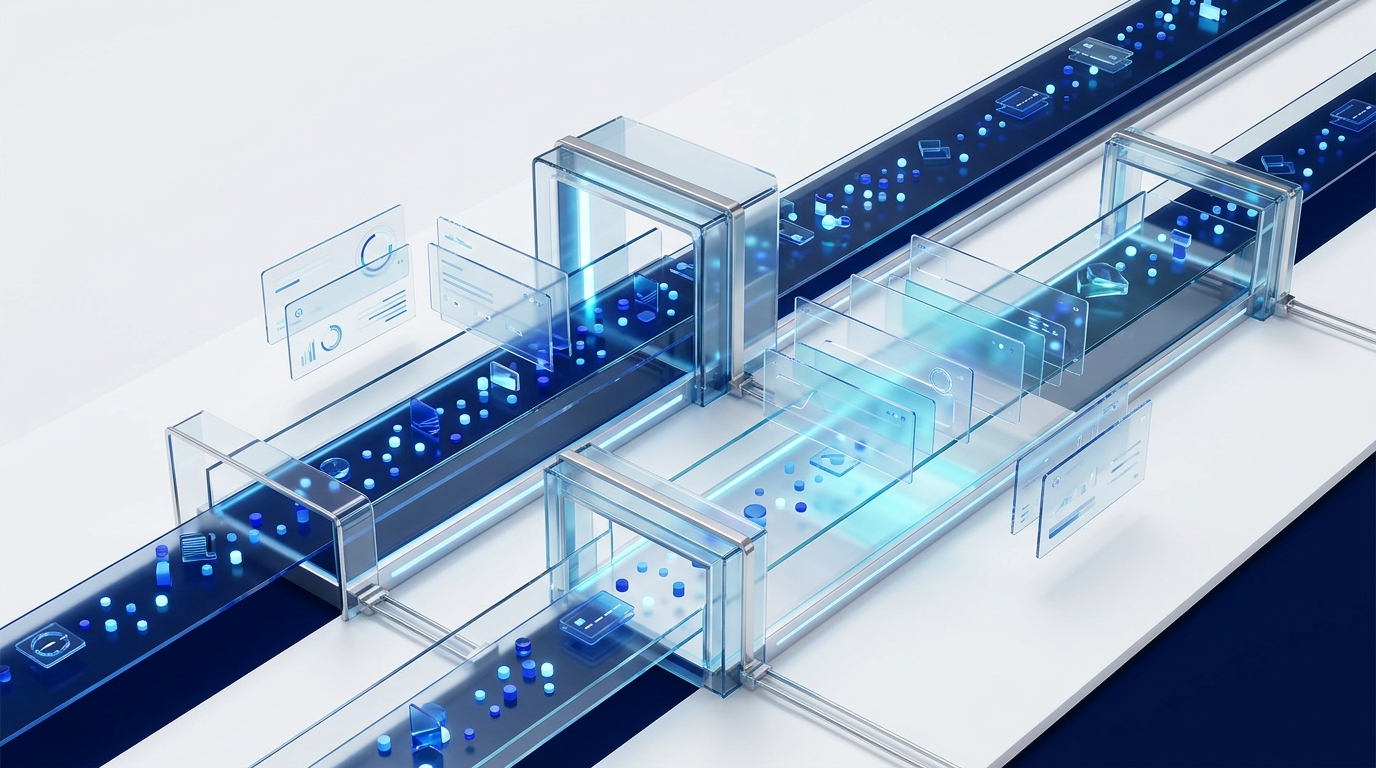

A shadow test means the vendor scores your real traffic in parallel without changing production behavior. Your current stack still makes the live decision. The candidate system watches the same events and produces its own outputs so you can compare them safely.

That matters because buying a fraud product is not only about model quality. It is also about whether the score distribution, explanations, latency, and queue impact make sense in your operating model.
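The parallel-scoring arrangement described above can be sketched roughly as follows. This is a minimal illustration, not any vendor's integration: `live_decide`, `shadow_score`, `handle_event`, the `sink` list, and the record fields are all hypothetical names chosen for the example. The point it tries to show is that the candidate's score, latency, and failures are recorded, while the live decision is returned untouched.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# All names here are hypothetical; swap in your own decision function,
# vendor client, and log sink.
_shadow_pool = ThreadPoolExecutor(max_workers=4)


def _run_shadow(event, live_decision, shadow_score, sink):
    """Score one event with the candidate and record the score or the error."""
    record = {
        "event_id": event["id"],
        "flow": event.get("flow"),
        "live_decision": live_decision,
    }
    start = time.perf_counter()
    try:
        record["shadow_score"] = shadow_score(event)
    except Exception as exc:  # a shadow failure must never reach production
        record["shadow_error"] = repr(exc)
    record["shadow_latency_ms"] = (time.perf_counter() - start) * 1000.0
    sink.append(record)


def handle_event(event, live_decide, shadow_score, sink):
    """Return the live decision; the candidate scores the same event off the hot path."""
    decision = live_decide(event)  # production behavior is unchanged
    future = _shadow_pool.submit(_run_shadow, event, decision, shadow_score, sink)
    return decision, future  # the future is returned only so callers can wait in tests
```

Running the candidate on a separate worker is one possible design choice: it keeps a slow or failing vendor call from adding latency to the live path. The trade-off is that the measured shadow latency reflects the vendor call alone, not its effect on an end-to-end request.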

Why feature checklists and demos are not enough

The weak part of most evaluations is that teams compare capabilities instead of behavior. “Uses AI,” “has device intelligence,” or “supports rules plus ML” are not useful discriminators once the vendor is on the shortlist.

The practical questions are different:

- Does the system stay fast enough when traffic is real?

- Do the explanations help analysts move faster or just sound impressive?

- Do false positives cluster in ways that create extra review work?

- Do the scores line up with your actual fraud patterns, not the vendor's favorite demo set?

What to compare during the test

- P50, P95, and P99 latency.

- Score distribution by flow, not just one global average.

- Top explanations or reasons on flagged cases.

- Precision and false-positive patterns at candidate thresholds.

- Analyst feedback on whether the outputs are usable.

If you skip any of those, you usually end up learning too late that the “good” pilot was never operationally good in the first place. For the broader evaluation checklist, start with Fraud Detection API: What to Look For in 2026.
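Two of the comparisons listed above, tail latency and precision at candidate thresholds, are simple to compute from shadow logs. The sketch below assumes a hypothetical record shape (`score` plus an analyst `label` of `"fraud"`, `"legit"`, or `None` when unreviewed) and uses the nearest-rank percentile definition; your logging schema and preferred percentile method may differ.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_summary(latencies_ms):
    """P50, P95, and P99 of the recorded shadow latencies."""
    return {f"p{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}


def precision_at(records, threshold):
    """Share of analyst-labeled records at or above the threshold that were confirmed fraud."""
    flagged = [
        r for r in records
        if r["label"] is not None and r["score"] >= threshold
    ]
    if not flagged:
        return None  # nothing labeled was flagged, so precision is undefined
    return sum(1 for r in flagged if r["label"] == "fraud") / len(flagged)
```

As an illustration of why the tail matters: for 98 requests at 40 ms and 2 requests at 900 ms, the mean is 57.2 ms, while `latency_summary` reports a P99 of 900 ms under the nearest-rank definition. Note that `precision_at` only counts reviewed cases, so it is only as representative as the sample your analysts actually labeled.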

How long it should run

Long enough to capture real variability. If you run a shadow test for a day or two, you mostly learn how the system behaves on a clean slice of traffic. That is not enough.

You want enough volume to see quiet periods, peak periods, incomplete enrichment, unusual edge cases, and at least a few analyst-reviewed outcomes. For most teams, that means at least one to two weeks of real traffic.

One concrete failure mode

A vendor can look excellent in the first meeting: strong average latency, modern UI, and plausible reasons on a few example cases.

Then the shadow run starts. On payout traffic, the scores compress into a narrow range, the reasons are generic, and the tail latency becomes erratic whenever one enrichment provider slows down. None of that was visible in the demo. All of it matters in production.

If you want the latency part of that problem in isolation, read Real-Time Fraud Scoring Latency: What 47ms Actually Means. If you want to know what analysts should actually see, the SHAP article goes deeper: SHAP Explainability for Fraud Ops.
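The score-compression symptom in that failure mode is easy to miss in a global histogram, so it can help to check the spread per flow. The sketch below is one rough way to do it, using the interquartile range of candidate scores. The record fields (`flow`, `score`), the `min_samples` floor, and the `min_iqr` cutoff are all illustrative assumptions, not recommended values.

```python
from collections import defaultdict
from statistics import quantiles


def score_spread_by_flow(records, min_samples=30):
    """Return {flow: (q1, median, q3)} of candidate scores for flows with enough samples."""
    by_flow = defaultdict(list)
    for r in records:
        by_flow[r["flow"]].append(r["score"])
    spread = {}
    for flow, scores in by_flow.items():
        if len(scores) >= min_samples:  # skip flows too small to summarize
            q1, median, q3 = quantiles(scores, n=4)
            spread[flow] = (q1, median, q3)
    return spread


def compressed_flows(spread, min_iqr=0.05):
    """Flows whose interquartile range falls below min_iqr (an arbitrary example cutoff)."""
    return sorted(flow for flow, (q1, _, q3) in spread.items() if q3 - q1 < min_iqr)
```

A flow that shows up in `compressed_flows` is not automatically a problem, but it is a prompt to ask the vendor why scores on that traffic barely move, and whether any threshold on that flow could separate good from bad at all.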

Red flags that should kill the pilot

- The vendor avoids showing score distributions on your own traffic.

- The reasons behind decisions are too generic to be operationally useful.

- The system performs well only after heavy threshold tuning that hides weak base behavior.

- Latency is framed only as an average and the tail is ignored.

- Ops feedback is treated as secondary to offline model metrics.

What a good outcome looks like

A good shadow test ends with something simple: you understand how the new system behaves, what tradeoffs it introduces, and what production changes it would justify.

That is a much higher standard than “the demo looked good,” but it is the right one. Fraud infrastructure should earn trust on your traffic before it touches your approvals.

About Riskernel

Riskernel is built to be evaluated in a shadow test first: real traffic, real latency, and explanations your ops team can actually use. If you want to compare decision quality against your current stack, start there. Get early access.